This year marks 70 years since the Dartmouth Conference of 1956, widely regarded as the birthplace of artificial intelligence as a field. Seven decades later, we're still hearing the same promises: AI will transform everything, revolutionize business, and deliver unprecedented ROI.

The difference? Some of it's actually true now. But not for the reasons most people think.

The Dartmouth Dream & The First Hype Cycle

In the summer of 1956, John McCarthy, Marvin Minsky, and a small group of researchers gathered at Dartmouth College with an audacious goal: create machines that could think. Their proposal suggested that a significant advance could be made in a single summer.

They were wrong. By decades.

That first hype cycle set the pattern. Grand promises. Disappointing timelines. Funding cuts. The first "AI winter" hit in the 1970s when governments pulled funding after researchers couldn't deliver on their predictions. It happened again in the late 1980s when expert systems failed to scale beyond narrow use cases.

The genius of these early failures? They forced the field to get serious about statistics.

Why Hype Keeps Returning (And Why It Keeps Failing)

Fast forward to 2026, and we're experiencing what might be the biggest AI hype cycle yet. OpenAI's Sam Altman compared GPT-5 development to the Manhattan Project. Users responded with… disappointment. Anthropic's CEO predicted AI would write 90% of developers' code within six months. The share of new code Google reports as AI-generated? Just over 30%.

The pattern is obvious: overpromising by industry leaders creates unrealistic timelines. The gap between prediction and delivery erodes credibility.

Here's what's different this time, however. The underlying statistical methods have actually matured. Machine learning models today rest on solid mathematical foundations: Bayesian inference, gradient descent optimization, probabilistic graphical models. These aren't magic. They're statistics at scale.
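
To make "statistics at scale" concrete, here is a minimal sketch of gradient descent fitting a one-variable linear model by minimizing mean squared error. The function name `fit_line`, the learning rate, the step count, and the toy data are all illustrative assumptions, not taken from any production system.

```python
def fit_line(xs, ys, lr=0.05, steps=2000):
    """Fit y = w*x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) with respect to w and b
        grad_w = (2.0 / n) * sum((w * x + b - y) * x for x, y in zip(xs, ys))
        grad_b = (2.0 / n) * sum((w * x + b - y) for x, y in zip(xs, ys))
        # Step against the gradient
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical data generated from y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(xs, ys)
```

On this toy data the fit recovers a slope close to 2 and an intercept close to 1. Large models run the same kind of loop over far more parameters and far more data.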

The problem isn't the technology. It's that most business leaders are chasing the hype instead of understanding the statistical reality.

The Statistical Evolution Nobody Talks About

While headlines scream about AGI and sentient machines, the real revolution has been far more mundane and far more valuable. Over 70 years, AI evolved from symbolic logic systems to pattern recognition machines grounded in probability theory.

The shift happened in stages:

1950s-1960s: Rule-based systems. Researchers tried to hard-code intelligence. It didn't scale.

1980s-1990s: Expert systems and early neural networks. Better, but brittle. They broke when faced with real-world variability.

2000s-2010s: Statistical machine learning takes center stage. Support vector machines, random forests, and gradient boosting are all rooted in statistical theory.

2020s: Deep learning and large language models. Still fundamentally statistical, just with more parameters and more data.

The throughline? Data beat the hype. Every single time.

Today's AI successes aren't magic. They're the result of carefully applied statistics, feature engineering, and data preparation. A 2025 MIT study reported that only about 5% of companies convert AI technology into actual revenue. Why? Because the rest focused on the tool instead of the statistical foundation underneath.

What Business Leaders Miss (And What It Costs Them)

Most executives approach AI with a simple question: "What's the latest tool we should buy?"

Wrong question.

The right question is: "Do we have the statistical infrastructure to make AI work?"

Here's what that actually means. AI models require clean, structured data. They need feature engineering: the process of transforming raw data into formats models can learn from. They need validation frameworks to ensure outputs are reliable. They need integration with existing systems, not just deployment in isolation.
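
As a rough illustration of feature engineering, the sketch below turns raw transaction rows into per-customer features a model could learn from. The field names (`customer_id`, `amount`, `date`), the function name `build_customer_features`, and the recency/frequency/monetary features are assumptions chosen for the example, not a prescribed schema.

```python
from collections import defaultdict
from datetime import date

def build_customer_features(transactions, as_of):
    """Aggregate raw transactions into recency/frequency/monetary features."""
    grouped = defaultdict(list)
    for t in transactions:
        grouped[t["customer_id"]].append(t)

    features = {}
    for customer_id, rows in grouped.items():
        last_purchase = max(r["date"] for r in rows)
        total = sum(r["amount"] for r in rows)
        features[customer_id] = {
            "recency_days": (as_of - last_purchase).days,  # days since last order
            "frequency": len(rows),                        # number of orders
            "monetary_total": total,                       # total spend
            "avg_order_value": total / len(rows),
        }
    return features

# Hypothetical raw data
transactions = [
    {"customer_id": "A", "amount": 120.0, "date": date(2026, 1, 5)},
    {"customer_id": "A", "amount": 80.0, "date": date(2026, 1, 20)},
    {"customer_id": "B", "amount": 40.0, "date": date(2025, 12, 1)},
]
features = build_customer_features(transactions, as_of=date(2026, 2, 1))
```

The raw rows say little on their own. The derived features, such as how recently and how often a customer buys, are what a segmentation or churn model would actually consume.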

The disconnect between AI capability and business implementation isn't a technology problem. It's a statistical maturity problem.

Companies that succeed with AI treat it as critical infrastructure requiring alignment, iteration, and resilience. They don't chase hype cycles. They build statistical foundations first.

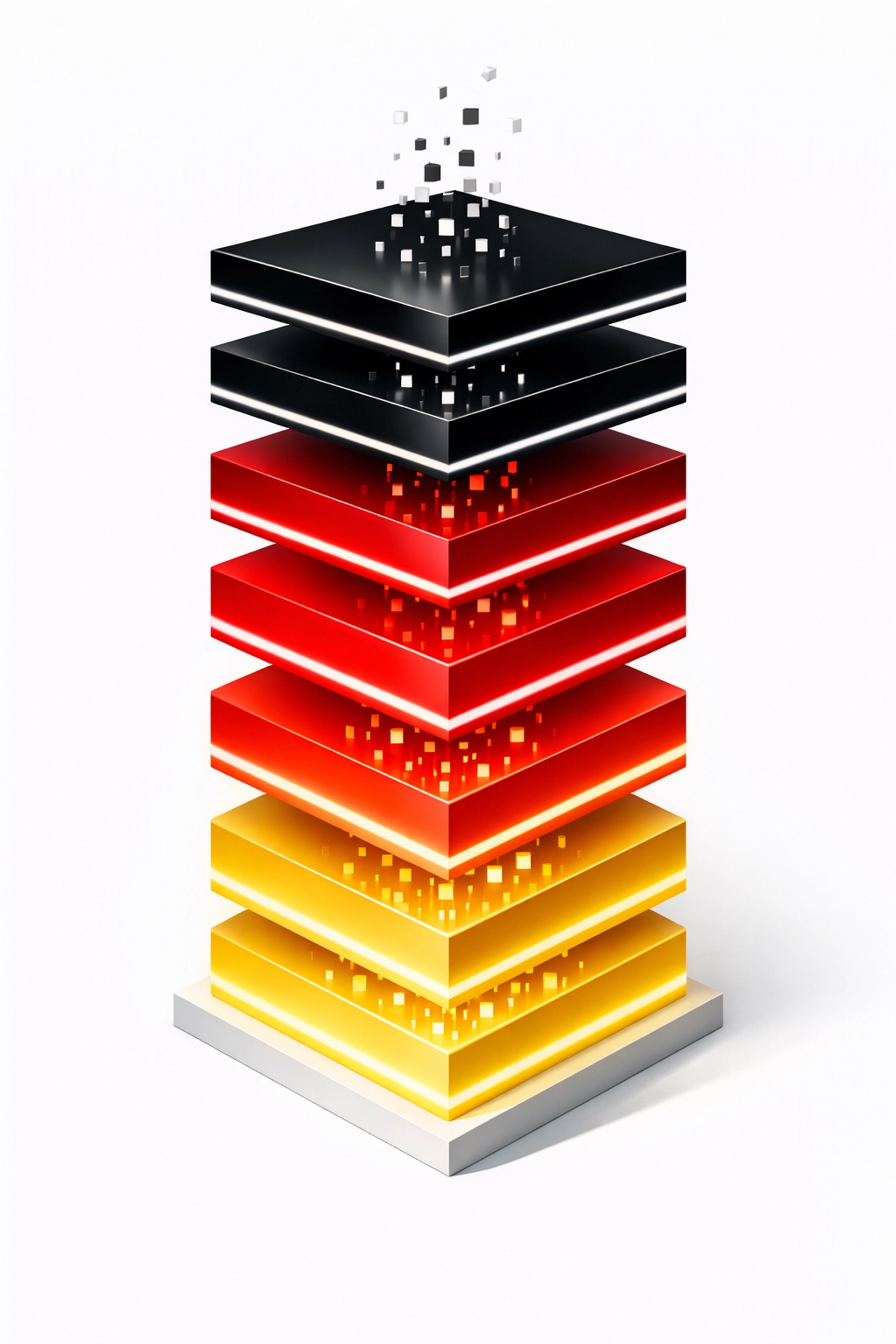

The Nine Level Framework: From Data to Decisions

At Marketways, we've spent years helping UAE and GCC organizations navigate this gap. Our approach is grounded in what we call the Nine Level Framework: a systematic method for converting raw data into actionable business insights.

The framework starts where most AI projects should start but don't: with the data itself.

Levels 1-3 focus on data collection, cleaning, and validation. Unglamorous work. Absolutely essential. Without clean data, your AI model is just expensive randomness.
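
The article does not spell out the specific checks these levels involve, so the following is only a sketch of what basic validation might look like. The three rules (required fields, a numeric range, duplicate detection), the function name `validate_records`, and the sample rows are illustrative assumptions.

```python
def validate_records(records, required_fields, numeric_ranges):
    """Split records into clean rows and (row_index, reason) rejections."""
    clean, rejected = [], []
    seen = set()
    for i, rec in enumerate(records):
        # Rule 1: every required field must be present and non-empty
        missing = [f for f in required_fields if rec.get(f) in (None, "")]
        if missing:
            rejected.append((i, "missing: " + ", ".join(missing)))
            continue
        # Rule 2: numeric fields must fall inside their allowed range
        out_of_range = [
            f for f, (lo, hi) in numeric_ranges.items()
            if rec.get(f) is not None and not (lo <= rec[f] <= hi)
        ]
        if out_of_range:
            rejected.append((i, "out of range: " + ", ".join(out_of_range)))
            continue
        # Rule 3: drop exact repeats of the required fields
        key = tuple(rec[f] for f in required_fields)
        if key in seen:
            rejected.append((i, "duplicate"))
            continue
        seen.add(key)
        clean.append(rec)
    return clean, rejected

# Hypothetical order rows
records = [
    {"order_id": 1, "customer_id": "A", "amount": 120.0},
    {"order_id": 2, "customer_id": "", "amount": 35.0},
    {"order_id": 3, "customer_id": "B", "amount": -10.0},
    {"order_id": 1, "customer_id": "A", "amount": 120.0},
]
clean, rejected = validate_records(
    records,
    required_fields=["order_id", "customer_id", "amount"],
    numeric_ranges={"amount": (0.0, 10000.0)},
)
```

Keeping the rejection reasons, rather than silently dropping rows, makes it possible to trace data quality problems back to their source.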

Levels 4-6 address feature engineering, model selection, and validation. This is where statistical expertise separates success from failure. Choosing the right algorithm isn't about what's trending. It's about what matches your data structure and business problem.
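
One common way to choose a model on evidence rather than trend is k-fold cross-validation: each candidate is scored on data it was not trained on. The sketch below compares a mean-only baseline with a simple least-squares line. The candidate models, the synthetic data, and all function names are assumptions made for illustration.

```python
import random

def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and split them into k nearly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def fit_mean(xs, ys):
    """Baseline: always predict the training mean."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_linear(xs, ys):
    """Ordinary least squares for y = w*x + b."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    w = sxy / sxx
    b = my - w * mx
    return lambda x: w * x + b

def cross_val_mse(fit, xs, ys, k=5, seed=0):
    """Mean squared error over all held-out points across k folds."""
    errors = []
    for test_idx in k_fold_indices(len(xs), k, seed):
        held_out = set(test_idx)
        train = [i for i in range(len(xs)) if i not in held_out]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.extend((model(xs[i]) - ys[i]) ** 2 for i in test_idx)
    return sum(errors) / len(errors)

# Hypothetical data: a noisy linear relationship
rng = random.Random(42)
xs = [float(i) for i in range(40)]
ys = [3.0 * x + 5.0 + rng.gauss(0, 2.0) for x in xs]

scores = {
    "mean_baseline": cross_val_mse(fit_mean, xs, ys),
    "linear": cross_val_mse(fit_linear, xs, ys),
}
best = min(scores, key=scores.get)
```

Because the data here really is linear, the linear model wins on held-out error. On differently shaped data the same procedure could just as easily favor the simpler baseline, which is the point of measuring instead of assuming.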

Levels 7-9 cover deployment, monitoring, and continuous improvement. AI models drift over time. Markets change. Customer behavior shifts. A model deployed in January might be useless by June without proper monitoring and retraining.
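
Drift monitoring can start with something as simple as comparing the distribution of an input feature at training time against what the model sees today. The sketch below uses the Population Stability Index (PSI), one widely used measure. The function name, the equal-width binning, the small floor for empty bins, and the sample data are assumptions for this example. The 0.1 and 0.25 cut-offs often quoted for PSI are rules of thumb rather than guarantees.

```python
import math

def population_stability_index(baseline, current, bins=10):
    """PSI between two numeric samples, using equal-width bins from the baseline."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            i = int((v - lo) / width) if width > 0 else 0
            # Clamp values outside the baseline range into the edge bins
            counts[min(max(i, 0), bins - 1)] += 1
        # Small floor avoids log(0) and division by zero for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

# Hypothetical feature values: training-time sample vs. two later samples
baseline = [float(i) for i in range(100)]
unchanged = list(baseline)
shifted = [v + 30.0 for v in baseline]

psi_same = population_stability_index(baseline, unchanged)
psi_shifted = population_stability_index(baseline, shifted)
```

An unchanged distribution scores zero, while the shifted sample scores well above the commonly cited 0.25 level, the kind of signal that would prompt a retraining review.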

The genius of this framework? It acknowledges that AI is an ongoing statistical process, not a one-time technology purchase.

We apply this when helping clients frame their AI roadmap and focus on data insights rather than chasing the latest headline.

Building an AI Roadmap That Survives the Hype Cycle

So what does this 70-year perspective actually mean for your AI strategy in 2026?

First, acknowledge that hype cycles will continue. Every few years, a new technique will be proclaimed as the "breakthrough." Some will deliver. Most won't. Your roadmap should be resilient to these fluctuations.

Second, invest in statistical fundamentals before investing in AI tools. Do you have data scientists who understand probability theory? Can your team perform exploratory data analysis? Do you have processes for feature engineering and model validation? If not, buying the latest AI platform won't help.

Third, start small and prove value. The companies generating ROI from AI aren't the ones deploying enterprise-wide systems on day one. They're running controlled experiments, measuring outcomes, and scaling what works.

A recent client in the retail sector wanted to implement AI-driven customer segmentation. Instead of deploying a massive system, we started with a single product line. We built the statistical foundation, validated the approach, measured the lift in conversion rates, and then scaled. That's how you convert AI from expense to revenue.
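
Measuring lift from a controlled experiment comes down to comparing two conversion rates and asking whether the gap could plausibly be noise. The sketch below computes relative lift and a two-sided two-proportion z-test. The function name and the visitor and conversion counts are hypothetical and are not the client's actual figures.

```python
import math

def conversion_lift(control_conv, control_n, treat_conv, treat_n):
    """Return relative lift, z statistic, and two-sided p-value."""
    p_c = control_conv / control_n
    p_t = treat_conv / treat_n
    lift = (p_t - p_c) / p_c
    # Pooled rate under the null hypothesis of no difference
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    z = (p_t - p_c) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return lift, z, p_value

# Hypothetical experiment: 5,000 visitors per group
lift, z, p_value = conversion_lift(
    control_conv=200, control_n=5000,   # 4.0% conversion
    treat_conv=260, treat_n=5000,       # 5.2% conversion
)
```

With these made-up numbers the relative lift is 30% and the p-value falls below 0.01, so the difference is unlikely to be chance alone. A smaller sample with the same rates might not clear that bar, which is why the measurement step comes before scaling.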

Fourth, understand that AI excels at narrow, well-defined tasks but struggles in high-stakes, regulated environments where errors are costly. Newer models haven't changed this reality, because it's a fundamental limitation of probabilistic systems: their outputs always carry some uncertainty. Your roadmap should account for this, not ignore it.

Finally, plan for continuous learning. AI isn't a project with an end date. It's an ongoing statistical discipline. Your team needs to evolve as methods improve and data landscapes shift.

The Next 70 Years Start Today

Here's the uncomfortable truth: most of the AI hype you're hearing today will look as quaint in 2096 as the Dartmouth predictions look now. But the statistical methods underlying successful AI implementations? Those will compound and improve.

Business leaders who understand this distinction, who can separate marketing noise from mathematical reality, will build sustainable competitive advantages. Those who chase hype cycles will burn budgets and erode stakeholder trust.

The 70-year evolution of AI teaches us one clear lesson: data beats hype. Statistics beat slogans. Rigorous implementation beats magical thinking.

At Marketways, we've seen this play out across industries and regions. The companies that succeed aren't the ones with the biggest AI budgets or the flashiest vendor partnerships. They're the ones that build statistical foundations, validate ruthlessly, and scale thoughtfully.

Seventy years from the Dartmouth dream, we finally have the tools to deliver on some of those early promises. But only if we approach them with statistical discipline instead of hype-driven enthusiasm.

Your AI roadmap shouldn't be built on what's trending. It should be built on what's proven. And after 70 years of evolution, we know exactly what that is: clean data, rigorous statistics, and systematic implementation.

The question isn't whether AI will transform your business. It's whether you'll build the statistical foundation required to make that transformation real.

Need help separating AI hype from statistical reality in your organization? Let's talk about building an AI roadmap grounded in 70 years of hard-won lessons.